problem definition & forward pass

We aim to compute the gradients for the loss function . The network consists of an input , a hidden layer , and an output .

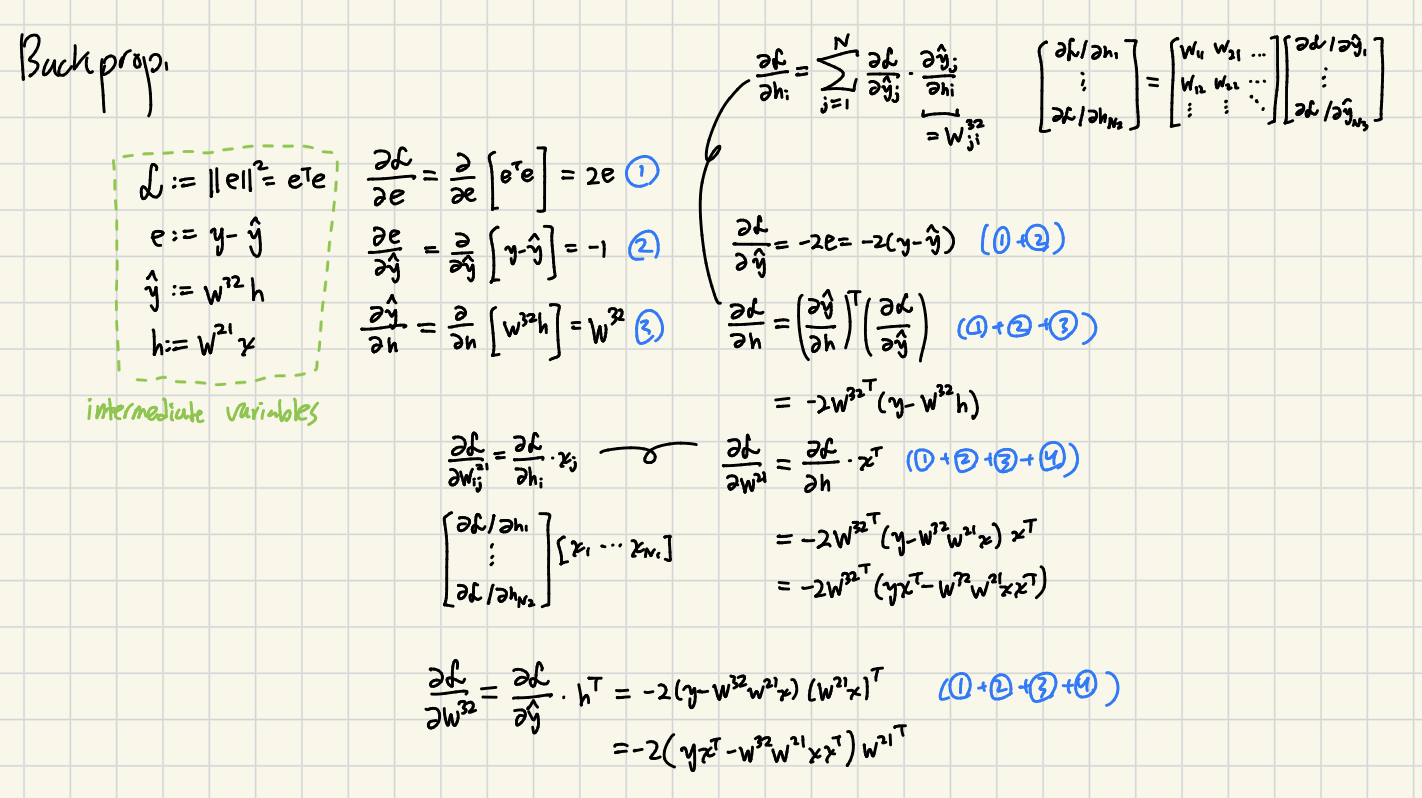

We define the following intermediate variables for the forward pass:

- loss:

- error:

- output:

- hidden state:

intermediate derivatives (chain rule)

To perform backpropagation, we first compute the partial derivatives for each step of the computational graph.

- derivative of loss w.r.t. error:

- derivative of error w.r.t. output:

(Note: In matrix calculus context, this represents )

- derivative of output w.r.t. hidden state:

backpropagation derivation

Gradient w.r.t output (): combining Equations (1) and (2) via the chain rule,

Gradient w.r.t Hidden Layer (): combining the previous result with Equation (3),

Substituting the known terms:

parameter gradients

(a) Gradient w.r.t Inner Weights ()

The gradient for the first layer weights is derived using the gradient of the hidden layer and the input :

Substitute :

Expand :

Distribute :

(b) Gradient w.r.t Outer Weights ()

The gradient for the second layer weights is derived using the gradient of the output and the hidden state :

Substitute and :

Apply transpose rule :

Distribute and factor out :

disclaimer: this note was mostly transcribed by Gemini